A Simple Guide to Using scrapy-splash

This article introduces the basic usage of scrapy-splash and walks through it with example code.

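1. scrapy_splash is a Scrapy component

scrapy_splash relies on Splash to load data that is generated by JavaScript.

Splash is a JavaScript rendering service. It is a lightweight browser that exposes an HTTP API, is implemented in Python and Lua, and is built on modules such as Twisted and Qt.

The response you ultimately get through scrapy-splash is equivalent to the page source after a browser has finished rendering the page.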
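To make the "HTTP API" part concrete, here is a minimal sketch of asking Splash for a rendered page directly, without Scrapy. It assumes a Splash instance is listening on localhost:8050 (the port used in the Docker setup in section 3) and uses Splash's render.html endpoint with its url and wait parameters. The helper names build_render_url and fetch_rendered_html are made up for illustration.

```python
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumption: Splash is running locally on port 8050.
SPLASH_BASE = "http://localhost:8050"


def build_render_url(page_url, wait=0.5, base=SPLASH_BASE):
    """Build a render.html URL asking Splash to load page_url and wait `wait` seconds."""
    query = urlencode({"url": page_url, "wait": wait})
    return f"{base}/render.html?{query}"


def fetch_rendered_html(page_url, wait=0.5):
    """Return the page source after rendering (requires a running Splash instance)."""
    with urlopen(build_render_url(page_url, wait), timeout=30) as resp:
        return resp.read().decode("utf-8", "ignore")
```

scrapy-splash builds and sends a request of this kind for you, so in a spider you normally never construct the URL by hand.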

2. What scrapy_splash does

scrapy_splash simulates a browser loading JavaScript and returns the data produced after the JavaScript has run.

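3. Setting up the scrapy_splash environment

3.1 Use the Splash Docker image

docker info    # show Docker system information

docker images    # list all local images

docker pull scrapinghub/splash    # pull the scrapinghub/splash image

docker run -p 8050:8050 scrapinghub/splash &    # run Splash in the background, mapped to port 8050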
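Before pointing Scrapy at Splash, it can help to confirm that the container is actually accepting connections. The following is a small sketch that only checks whether the TCP port is open. It assumes the port mapping from the docker run command above, and the function name splash_port_open is hypothetical.

```python
import socket


def splash_port_open(host="localhost", port=8050, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds, else False."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

An open port does not prove that Splash itself is healthy, but a closed one quickly explains why every SplashRequest fails.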

3.2 Install the Python package: pip install scrapy-splash

3.3 Scrapy configuration:

```python
DOWNLOADER_MIDDLEWARES = {
    'scrapy_splash.SplashCookiesMiddleware': 723,
    'scrapy_splash.SplashMiddleware': 725,
    'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware': 810,
}
SPIDER_MIDDLEWARES = {
    'scrapy_splash.SplashDeduplicateArgsMiddleware': 100,
}
DUPEFILTER_CLASS = 'scrapy_splash.SplashAwareDupeFilter'
HTTPCACHE_STORAGE = 'scrapy_splash.SplashAwareFSCacheStorage'
```
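The scrapy-splash README also lists a SPLASH_URL setting, which tells the middleware where Splash is listening; the settings above do not include it. A minimal sketch, assuming Splash runs on the same machine with the 8050 port mapping from section 3.1:

```python
# settings.py (addition)
# Assumption: Splash is reachable locally on the port mapped by
# `docker run -p 8050:8050 ...`; change the host if Docker runs elsewhere.
SPLASH_URL = 'http://localhost:8050'
```

If Docker runs on another host or inside a VM, the host part would need to be that machine's address instead.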

3.4 Using it in Scrapy

```python
from scrapy_splash import SplashRequest

# Inside the spider's start_requests() method; 'wait' is the render wait time in seconds.
yield SplashRequest(self.start_urls[0], callback=self.parse, args={'wait': 0.5})
```

4. Test code:

```python
import datetime
import os

import scrapy
from scrapy_splash import SplashRequest

from ..settings import LOG_DIR  # LOG_DIR comes from this project's settings.py


class SplashSpider(scrapy.Spider):
    name = 'splash'
    allowed_domains = ['biqugedu.com']
    # Assumption: the original omits start_urls even though start_requests()
    # reads start_urls[0]; replace this with the page you want rendered.
    start_urls = ['http://biqugedu.com/']

    custom_settings = {
        # One log file per spider per day.
        'LOG_FILE': os.path.join(LOG_DIR, '%s_%s.log' % (name, datetime.date.today().strftime('%Y-%m-%d'))),
        'LOG_LEVEL': 'INFO',
        'CONCURRENT_REQUESTS': 8,
        'AUTOTHROTTLE_ENABLED': True,
        'AUTOTHROTTLE_TARGET_CONCURRENCY': 8,

        'DOWNLOADER_MIDDLEWARES': {
            'scrapy_splash.SplashCookiesMiddleware': 723,
            'scrapy_splash.SplashMiddleware': 725,
            'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware': 810,
        },
        'SPIDER_MIDDLEWARES': {
            'scrapy_splash.SplashDeduplicateArgsMiddleware': 100,
        },
        'DUPEFILTER_CLASS': 'scrapy_splash.SplashAwareDupeFilter',
        'HTTPCACHE_STORAGE': 'scrapy_splash.SplashAwareFSCacheStorage',
    }

    def start_requests(self):
        # Send the request through Splash and wait 0.5 s for JavaScript to run.
        yield SplashRequest(self.start_urls[0], callback=self.parse, args={'wait': 0.5})

    def parse(self, response):
        """Log the rendered page source returned by Splash."""
        response_str = response.body.decode('utf-8', 'ignore')
        self.logger.info(response_str)
```

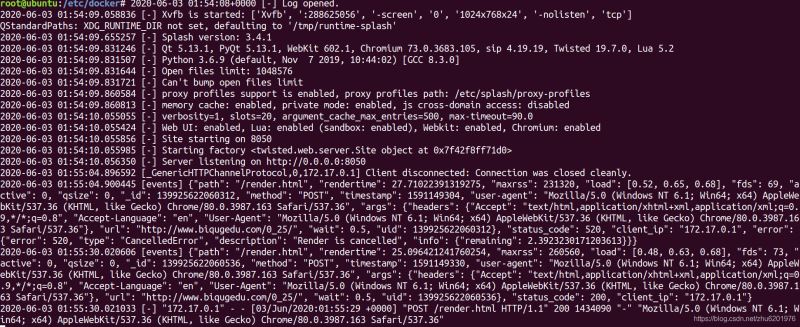
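The parse callback above only logs the whole rendered body. As a sketch of going one step further, the following pulls the page title out of a rendered HTML string using only the standard library. The names TitleParser and extract_title are made up for illustration; inside a real spider, Scrapy's own selectors (for example response.css) would be the more usual choice.

```python
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collect the text inside the first <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self._done = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title" and not self._done:
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title" and self._in_title:
            self._in_title = False
            self._done = True

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_title(html):
    """Return the stripped text of the first <title>, or '' if there is none."""
    parser = TitleParser()
    parser.feed(html)
    return parser.title.strip()
```

In parse, this could be called as extract_title(response_str) to log just the title instead of the full page.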

scrapy-splash receives the JS request:
