iOS: Pulling, Parsing, Decoding, and Synchronously Rendering Audio and Video Streams from a Complete File


How It Works

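Prerequisites

Audio and video fundamentals

Setting up an FFmpeg environment on iOS

Parsing video data with FFmpeg

Hardware video decoding with VideoToolbox

Audio decoding with Audio Converter

Audio decoding with FFmpeg

Video decoding with FFmpeg

Rendering video data with OpenGL

H.264 and H.265 bitstream structure

Implementing a queue for transferring audio data

An Audio Queue player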

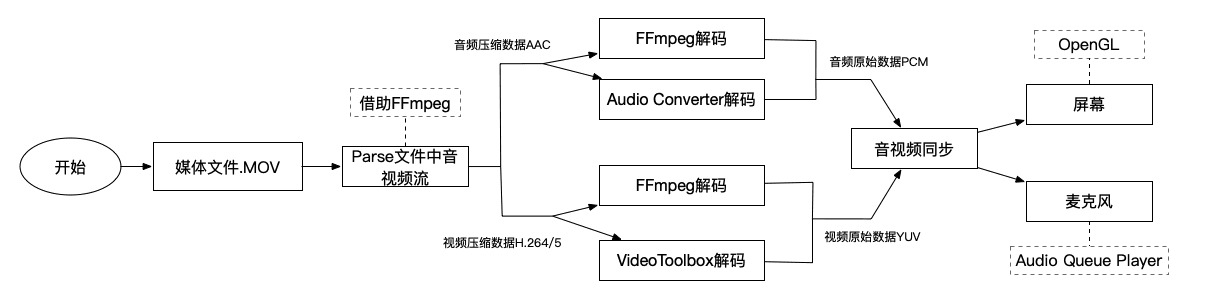

Overall Architecture

Simplified Flow

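FFmpeg parsing (demuxing):

1. Create the `AVFormatContext` object: `AVFormatContext *avformat_alloc_context(void);`
2. Open the file and fill the given context object from it: `int avformat_open_input(AVFormatContext **ps, const char *url, AVInputFormat *fmt, AVDictionary **options);`
3. Read the stream information in the file: `int avformat_find_stream_info(AVFormatContext *ic, AVDictionary **options);`
4. Get the audio and video streams in the file: `m_formatContext->streams[audio/video index];`
5. Start parsing to read the frames in the file: `int av_read_frame(AVFormatContext *s, AVPacket *pkt);`
6. For video frames, generate key information such as the SPS and PPS with `av_bitstream_filter_filter` (this API was deprecated and later removed from FFmpeg in favor of `av_bsf_send_packet` / `av_bsf_receive_packet`).

Each `AVPacket` read this way holds compressed audio or video data from the file; reading until the end of the file yields all of it.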
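The parse flow above obtains the SPS and PPS through `av_bitstream_filter_filter`. For MP4/MOV input, the same parameter sets are also stored in the video stream's extradata as an AVCDecoderConfigurationRecord ("avcC", defined in ISO/IEC 14496-15). The sketch below is an alternative to the filter, not the author's code: it reads the first SPS and PPS straight out of that record in plain C. `AvccInfo` and `parse_avcc` are hypothetical names, and the sketch assumes the extradata really is in avcC form (H.264 in an MP4/MOV container).

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical result type. The pointers point into the caller's buffer; nothing is copied. */
typedef struct {
    const uint8_t *sps;   /* first SPS NAL unit, without start code or length prefix */
    size_t sps_size;
    const uint8_t *pps;   /* first PPS NAL unit */
    size_t pps_size;
    int nal_length_size;  /* size of each NALU length prefix in bytes, usually 4 */
} AvccInfo;

/* Reads a big-endian 16-bit value. */
static size_t read_be16(const uint8_t *p) {
    return ((size_t)p[0] << 8) | p[1];
}

/* Parses an avcC record. Returns 0 on success, -1 on malformed or truncated input. */
static int parse_avcc(const uint8_t *buf, size_t len, AvccInfo *out) {
    assert(buf != NULL && out != NULL);
    if (len < 7 || buf[0] != 1) return -1;      /* configurationVersion must be 1 */
    out->nal_length_size = (buf[4] & 0x03) + 1; /* lengthSizeMinusOne in the low 2 bits */

    size_t pos = 5;
    size_t num_sps = buf[pos++] & 0x1F;         /* numOfSequenceParameterSets, low 5 bits */
    if (num_sps == 0) return -1;
    for (size_t i = 0; i < num_sps; i++) {
        if (pos + 2 > len) return -1;
        size_t n = read_be16(buf + pos);        /* 16-bit length before each SPS */
        pos += 2;
        if (pos + n > len) return -1;
        if (i == 0) { out->sps = buf + pos; out->sps_size = n; }
        pos += n;
    }

    if (pos + 1 > len) return -1;
    size_t num_pps = buf[pos++];                /* numOfPictureParameterSets, full byte */
    if (num_pps == 0) return -1;
    for (size_t i = 0; i < num_pps; i++) {
        if (pos + 2 > len) return -1;
        size_t n = read_be16(buf + pos);        /* 16-bit length before each PPS */
        pos += 2;
        if (pos + n > len) return -1;
        if (i == 0) { out->pps = buf + pos; out->pps_size = n; }
        pos += n;
    }
    return 0;
}
```

The pointers and sizes map onto the `parameterSetPointers` and `parameterSetSizes` arrays of `CMVideoFormatDescriptionCreateFromH264ParameterSets`, and `nal_length_size` onto its `NALUnitHeaderLength` argument. H.265 uses a different record (hvcC), which this sketch does not handle.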

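FFmpeg decoding:

1. Get the decoder context of the stream: `formatContext->streams[a/v index]->codec;` (newer FFmpeg releases removed this field; there you allocate a context with `avcodec_alloc_context3` and fill it from `streams[index]->codecpar` with `avcodec_parameters_to_context`).
2. Find the decoder from the codec ID in that context: `AVCodec *avcodec_find_decoder(enum AVCodecID id);`
3. Open the decoder: `int avcodec_open2(AVCodecContext *avctx, const AVCodec *codec, AVDictionary **options);`
4. Send the compressed audio/video data from the file to the decoder: `int avcodec_send_packet(AVCodecContext *avctx, const AVPacket *avpkt);`
5. Receive the decoded audio/video data in a loop: `int avcodec_receive_frame(AVCodecContext *avctx, AVFrame *frame);` One packet can yield zero or more frames, so keep calling it until it returns `AVERROR(EAGAIN)` (more input needed) or `AVERROR_EOF`.
6. Audio may need to be resampled (with `SwrContext`) into a format the device supports for playback.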
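Decoded frames carry timestamps in their stream's `time_base`, while the sort callback `getSortedVideoNode:` in the sample code works with presentation timestamps in milliseconds. A minimal sketch of that conversion in plain C, assuming a hypothetical `DemoRational` stand-in for FFmpeg's `AVRational` (fields `num`/`den`) so it compiles without FFmpeg headers. With FFmpeg available, `av_rescale_q(pts, time_base, (AVRational){1, 1000})` does the same job with overflow-safe arithmetic.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical stand-in for FFmpeg's AVRational. */
typedef struct { int num; int den; } DemoRational;

/* Converts a pts expressed in time base `tb` to milliseconds, rounding to the
 * nearest value (halves away from zero). Unlike av_rescale_q it does not guard
 * against overflow of pts * num * 1000. */
static int64_t pts_to_ms(int64_t pts, DemoRational tb) {
    assert(tb.den > 0);
    int64_t scaled = pts * tb.num * 1000;
    int64_t half = tb.den / 2;
    /* C integer division truncates toward zero, so bias by half a unit first. */
    return scaled >= 0 ? (scaled + half) / tb.den : (scaled - half) / tb.den;
}
```

For example, an audio pts of 1024 in a 1/48000 time base is about 21.33 ms and rounds to 21.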

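VideoToolbox decoding (video):

1. Separate the key NALU information (SPS, PPS, and so on) from the extradata that FFmpeg parsed out of the file.
2. Create the video format description (`CMVideoFormatDescriptionRef`) from that information with `CMVideoFormatDescriptionCreateFromH264ParameterSets` or `CMVideoFormatDescriptionCreateFromHEVCParameterSets`.
3. Create the decoder with `VTDecompressionSessionCreate`, passing the related parameters such as the format description, the destination pixel buffer attributes, and the output callback.
4. Put the compressed data into a `CMBlockBufferRef` with `CMBlockBufferCreateWithMemoryBlock`, then wrap it in a `CMSampleBufferRef` (for example with `CMSampleBufferCreateReady`), which is what the decode call takes.
5. Start decoding with `VTDecompressionSessionDecodeFrame`; the decoded video data arrives in the output callback.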
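VideoToolbox expects each NAL unit in the `CMBlockBufferRef` to be prefixed by its length (the AVCC layout, whose prefix size is the `NALUnitHeaderLength` given when the format description is created), not by Annex B start codes. Packets that went through an Annex B bitstream filter (such as FFmpeg's `h264_mp4toannexb`) therefore need converting back before decoding. A minimal sketch in plain C, assuming 4-byte length prefixes; `find_start_code` and `annexb_to_avcc` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Returns the offset of the next 3- or 4-byte start code at or after `from`,
 * or `len` if there is none; stores the start code's length in *sc_len. */
static size_t find_start_code(const uint8_t *buf, size_t len, size_t from, size_t *sc_len) {
    for (size_t i = from; i + 3 <= len; i++) {
        if (buf[i] != 0 || buf[i + 1] != 0) continue;
        if (buf[i + 2] == 1) { *sc_len = 3; return i; }
        if (buf[i + 2] == 0 && i + 4 <= len && buf[i + 3] == 1) { *sc_len = 4; return i; }
    }
    return len;
}

/* Rewrites start-code-delimited (Annex B) data as NAL units with 4-byte
 * big-endian length prefixes. Returns bytes written, or -1 if `cap` is too small. */
static long annexb_to_avcc(const uint8_t *in, size_t len, uint8_t *out, size_t cap) {
    assert(in != NULL && out != NULL);
    size_t sc_len = 0;
    size_t pos = find_start_code(in, len, 0, &sc_len);
    size_t written = 0;
    while (pos < len) {
        size_t nal_start = pos + sc_len;
        size_t next_sc_len = 0;
        size_t next = find_start_code(in, len, nal_start, &next_sc_len);
        size_t nal_size = next - nal_start;
        if (nal_size > 0) {
            if (written + 4 + nal_size > cap) return -1;
            out[written++] = (uint8_t)(nal_size >> 24);
            out[written++] = (uint8_t)(nal_size >> 16);
            out[written++] = (uint8_t)(nal_size >> 8);
            out[written++] = (uint8_t)nal_size;
            memcpy(out + written, in + nal_start, nal_size);
            written += nal_size;
        }
        pos = next;          /* equals len when no further start code exists */
        sc_len = next_sc_len;
    }
    return (long)written;
}
```

Because 3-byte start codes become 4-byte prefixes, the output can be slightly larger than the input, so size `out` with some headroom. The converted bytes are what would go into `CMBlockBufferCreateWithMemoryBlock`.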

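AudioConverter decoding (audio):

1. Create the decoder from the ASBD (`AudioStreamBasicDescription`) structs of the source format and the decoded format with `AudioConverterNewSpecific`, using an `AudioClassDescription` to specify the decoder type (hardware or software codec).
2. Start decoding with `AudioConverterFillComplexBuffer`.

Note: each decode operation needs 1024 samples (one AAC packet) before it can complete.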
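The 1024-sample rule also fixes the timing of the audio stream: every AAC packet covers 1024 / sample-rate seconds and decodes to a fixed amount of PCM. A small sketch of that arithmetic in plain C, assuming AAC-LC and interleaved PCM output; the helper names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* AAC-LC: one packet (access unit) decodes to 1024 PCM frames per channel. */
#define AAC_FRAMES_PER_PACKET 1024

/* Duration of one AAC packet in microseconds, truncated toward zero. */
static int64_t aac_packet_duration_us(int sample_rate) {
    assert(sample_rate > 0);
    return (int64_t)AAC_FRAMES_PER_PACKET * 1000000 / sample_rate;
}

/* Bytes produced by decoding one packet to interleaved PCM. */
static int aac_packet_pcm_bytes(int channels, int bytes_per_sample) {
    assert(channels > 0 && bytes_per_sample > 0);
    return AAC_FRAMES_PER_PACKET * channels * bytes_per_sample;
}
```

For the 48 kHz stereo source described by `audioFormat` in the sample code, one packet lasts about 21.3 ms; assuming 16-bit samples, it decodes to 4096 bytes of PCM.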

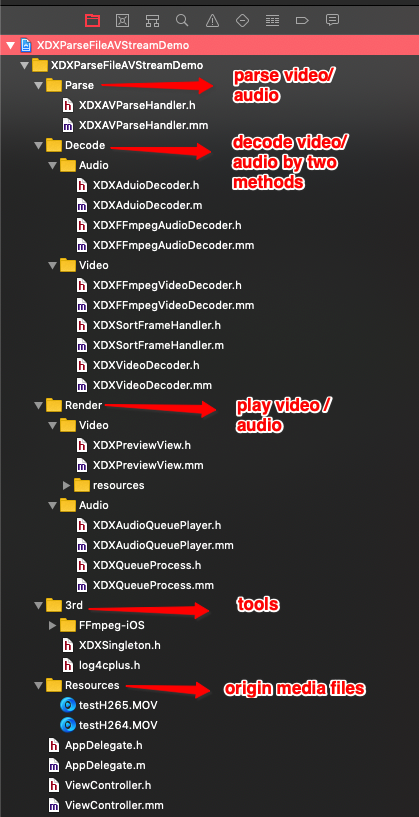

File Structure

Quick Start

`startRenderAVByFFmpegWithFileName:` decodes both streams with FFmpeg; `startRenderAVByOriginWithFileName:` decodes them with the native decoders (VideoToolbox for video, AudioConverter for audio).

```objc
- (void)startRenderAVByFFmpegWithFileName:(NSString *)fileName {
    NSString *path = [[NSBundle mainBundle] pathForResource:fileName ofType:@"MOV"];
    XDXAVParseHandler *parseHandler = [[XDXAVParseHandler alloc] initWithPath:path];
    XDXFFmpegVideoDecoder *videoDecoder = [[XDXFFmpegVideoDecoder alloc] initWithFormatContext:[parseHandler getFormatContext] videoStreamIndex:[parseHandler getVideoStreamIndex]];
    videoDecoder.delegate = self;
    XDXFFmpegAudioDecoder *audioDecoder = [[XDXFFmpegAudioDecoder alloc] initWithFormatContext:[parseHandler getFormatContext] audioStreamIndex:[parseHandler getAudioStreamIndex]];
    audioDecoder.delegate = self;

    static BOOL isFindIDR = NO;
    [parseHandler startParseGetAVPackeWithCompletionHandler:^(BOOL isVideoFrame, BOOL isFinish, AVPacket packet) {
        if (isFinish) {
            isFindIDR = NO;
            [videoDecoder stopDecoder];
            [audioDecoder stopDecoder];
            dispatch_async(dispatch_get_main_queue(), ^{
                self.startWorkBtn.hidden = NO;
            });
            return;
        }

        if (isVideoFrame) { // Video
            // Drop video packets until the first key frame arrives.
            // Test the key-frame bit rather than comparing flags == 1,
            // because other flag bits may also be set.
            if ((packet.flags & AV_PKT_FLAG_KEY) && isFindIDR == NO) {
                isFindIDR = YES;
            }
            if (!isFindIDR) {
                return;
            }
            [videoDecoder startDecodeVideoDataWithAVPacket:packet];
        } else { // Audio
            [audioDecoder startDecodeAudioDataWithAVPacket:packet];
        }
    }];
}

- (void)getDecodeVideoDataByFFmpeg:(CMSampleBufferRef)sampleBuffer {
    CVPixelBufferRef pix = CMSampleBufferGetImageBuffer(sampleBuffer);
    [self.previewView displayPixelBuffer:pix];
}

- (void)getDecodeAudioDataByFFmpeg:(void *)data size:(int)size pts:(int64_t)pts isFirstFrame:(BOOL)isFirstFrame {
    // NSLog(@"demon test - %d",size);
    // Put audio data from audio file into audio data queue
    [self addBufferToWorkQueueWithAudioData:data size:size pts:pts];

    // control rate
    usleep(14.5*1000);
}

- (void)startRenderAVByOriginWithFileName:(NSString *)fileName {
    NSString *path = [[NSBundle mainBundle] pathForResource:fileName ofType:@"MOV"];
    XDXAVParseHandler *parseHandler = [[XDXAVParseHandler alloc] initWithPath:path];
    XDXVideoDecoder *videoDecoder = [[XDXVideoDecoder alloc] init];
    videoDecoder.delegate = self;

    // Origin file aac format
    AudioStreamBasicDescription audioFormat = {
        .mSampleRate       = 48000,
        .mFormatID         = kAudioFormatMPEG4AAC,
        .mChannelsPerFrame = 2,
        .mFramesPerPacket  = 1024,
    };

    XDXAduioDecoder *audioDecoder = [[XDXAduioDecoder alloc] initWithSourceFormat:audioFormat
                                                                     destFormatID:kAudioFormatLinearPCM
                                                                       sampleRate:48000
                                                              isUseHardwareDecode:YES];

    [parseHandler startParseWithCompletionHandler:^(BOOL isVideoFrame, BOOL isFinish, struct XDXParseVideoDataInfo *videoInfo, struct XDXParseAudioDataInfo *audioInfo) {
        if (isFinish) {
            [videoDecoder stopDecoder];
            [audioDecoder freeDecoder];
            dispatch_async(dispatch_get_main_queue(), ^{
                self.startWorkBtn.hidden = NO;
            });
            return;
        }

        if (isVideoFrame) {
            [videoDecoder startDecodeVideoData:videoInfo];
        } else {
            [audioDecoder decodeAudioWithSourceBuffer:audioInfo->data
                                     sourceBufferSize:audioInfo->dataSize
                                      completeHandler:^(AudioBufferList * _Nonnull destBufferList, UInt32 outputPackets, AudioStreamPacketDescription * _Nonnull outputPacketDescriptions) {
                // Put audio data from audio file into audio data queue
                [self addBufferToWorkQueueWithAudioData:destBufferList->mBuffers->mData
                                                   size:destBufferList->mBuffers->mDataByteSize
                                                    pts:audioInfo->pts];

                // control rate
                usleep(16.8*1000);
            }];
        }
    }];
}

- (void)getVideoDecodeDataCallback:(CMSampleBufferRef)sampleBuffer isFirstFrame:(BOOL)isFirstFrame {
    if (self.hasBFrame) {
        // Note: the first frame does not need to be sorted.
        if (isFirstFrame) {
            CVPixelBufferRef pix = CMSampleBufferGetImageBuffer(sampleBuffer);
            [self.previewView displayPixelBuffer:pix];
            return;
        }

        [self.sortHandler addDataToLinkList:sampleBuffer];
    } else {
        CVPixelBufferRef pix = CMSampleBufferGetImageBuffer(sampleBuffer);
        [self.previewView displayPixelBuffer:pix];
    }
}

#pragma mark - Sort Callback
- (void)getSortedVideoNode:(CMSampleBufferRef)sampleBuffer {
    int64_t pts = (int64_t)(CMTimeGetSeconds(CMSampleBufferGetPresentationTimeStamp(sampleBuffer)) * 1000);
    static int64_t lastpts = 0;
    // NSLog(@"Test marigin - %lld",pts - lastpts);
    lastpts = pts;

    [self.previewView displayPixelBuffer:CMSampleBufferGetImageBuffer(sampleBuffer)];
}
```
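When the stream contains B-frames, `getVideoDecodeDataCallback:isFirstFrame:` hands decoded frames to `sortHandler`, and `getSortedVideoNode:` receives them in presentation order. The sort handler's source is not shown here; the sketch below is one hypothetical way to do the reordering in plain C: a small buffer kept sorted by pts that releases its earliest frame once `depth` frames are queued. `ReorderBuffer` and the `reorder_*` functions are hypothetical, and only timestamps are stored; a real version would hold `CMSampleBufferRef`s.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define REORDER_MAX 16 /* hypothetical upper bound on buffered frames */

/* Holds presentation timestamps sorted ascending. */
typedef struct {
    int64_t pts[REORDER_MAX];
    size_t count;
    size_t depth; /* frames to hold before releasing the earliest one */
} ReorderBuffer;

static void reorder_init(ReorderBuffer *rb, size_t depth) {
    assert(depth >= 1 && depth <= REORDER_MAX);
    rb->count = 0;
    rb->depth = depth;
}

/* Removes and returns the smallest pts (index 0), shifting the rest down. */
static int64_t reorder_pop_front(ReorderBuffer *rb) {
    int64_t first = rb->pts[0];
    for (size_t i = 1; i < rb->count; i++) rb->pts[i - 1] = rb->pts[i];
    rb->count--;
    return first;
}

/* Inserts a frame in pts order. Once `depth` frames are buffered, releases
 * the earliest into *out and returns 1; otherwise returns 0. */
static int reorder_push(ReorderBuffer *rb, int64_t pts, int64_t *out) {
    size_t i = rb->count;
    while (i > 0 && rb->pts[i - 1] > pts) { rb->pts[i] = rb->pts[i - 1]; i--; }
    rb->pts[i] = pts;
    rb->count++;
    if (rb->count < rb->depth) return 0;
    *out = reorder_pop_front(rb);
    return 1;
}

/* At end of stream, drains the remaining frames in pts order. */
static int reorder_flush(ReorderBuffer *rb, int64_t *out) {
    if (rb->count == 0) return 0;
    *out = reorder_pop_front(rb);
    return 1;
}
```

The depth trades latency for correctness: it must cover how far the decode order can run ahead of display order. For an I P B B pattern, decoded as pts 0, 3, 1, 2, 6, 4, 5, a depth of 3 releases the frames as 0 through 6 in order.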